How End-to-End Observability Breaks Silos and Transforms Application Performance

It’s 3 AM. Your payment service is down. Revenue is bleeding. Your DevOps engineer stares at Prometheus dashboards showing CPU spikes. Your application developer digs through Elasticsearch logs pointing to a database timeout. Your security analyst flags anomalous API traffic in Splunk. Three teams, three tools, three different stories about the same incident.

The problem? You’re not looking at a technical failure. You’re staring at the consequences of fragmented observability, where data silos turn every production incident into a war room guessing game.

Welcome to the reality of modern distributed systems: microservices spanning multiple clouds, ephemeral containers that live for seconds, and AI-powered data pipelines generating telemetry at unprecedented scale. Traditional monitoring wasn’t built for this world. And the gaps are costing you time, money, and sleep. End-to-end observability isn’t just the next evolution of monitoring. It’s a fundamental rethinking of how teams understand, troubleshoot, and optimize complex systems, by breaking down the walls between development, operations, and security.

Why Traditional Monitoring Fails in Modern Systems

Traditional monitoring was designed for a different era, when applications lived on physical servers, architectures were monolithic, and “distributed” meant a load balancer and two VMs. That world is gone. The architecture shift broke monitoring assumptions. Modern cloud-native systems run on ephemeral infrastructure where containers spin up and disappear in seconds, services communicate across multiple cloud providers, and a single user request might touch 20+ microservices before completing. Your monitoring stack? Still built for static environments.

Tool sprawl creates observability debt. Organizations today average 5-10 separate monitoring tools: Prometheus for metrics, ELK or Splunk for logs, Jaeger for distributed traces, separate security monitoring, and APM tools for application performance. Each tool collects its own data in its own format with its own storage backend. The result isn’t comprehensive visibility; it’s data fragmentation at scale.

Context blindness kills velocity. When an incident hits, your teams know what broke. Metrics show the symptom. But understanding why requires manually correlating logs from one system, traces from another, and metrics from a third. Engineers waste 40-60% of incident response time just gathering context instead of fixing problems. When every second counts, this context-switching tax is unacceptable.

The collaboration penalty compounds the problem. Developers optimize for local environments without production visibility. Operations focuses on infrastructure metrics without application context. Security analyzes threats in isolation from performance baselines. Each team builds mental models from incomplete data, leading to misdiagnosis, finger-pointing, and prolonged Mean Time to Resolution (MTTR). Modern systems demand modern observability. The question isn’t whether traditional monitoring is failing, it’s how much that failure is costing your organization in downtime, developer productivity, and competitive advantage.

What End-to-End Observability Really Means in Practice

End-to-end observability isn’t “monitoring with better dashboards.” It’s a unified telemetry architecture where metrics, logs, and traces converge into correlated, queryable context across your entire system, from edge requests to database queries to AI model inference.

The three pillars, reimagined: Traditional observability taught us to collect metrics, logs, and traces separately. An end-to-end observability platform flips that model: these signals are collected, stored, and analyzed together from the start. When latency spikes in your API gateway, you don’t hunt through three tools. You query once and see the metric spike, the error logs from downstream services, and the distributed trace showing exactly which database call timed out, all correlated by request ID, timestamp, and service topology.
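As a rough illustration of what "query once" means, the sketch below groups metrics, logs, and spans under the request ID they share. The record shapes, field names, and sample values are hypothetical and not taken from any particular platform; a real backend would do this join at query time over indexed storage.

```python
from collections import defaultdict

# Hypothetical telemetry records; field names and values are illustrative only.
metrics = [
    {"request_id": "req-1", "service": "api-gateway", "latency_ms": 120},
    {"request_id": "req-2", "service": "api-gateway", "latency_ms": 4800},
]
logs = [
    {"request_id": "req-2", "service": "payments", "level": "ERROR",
     "message": "database timeout"},
]
spans = [
    {"request_id": "req-1", "service": "payments",
     "operation": "SELECT orders", "duration_ms": 40},
    {"request_id": "req-2", "service": "payments",
     "operation": "SELECT orders", "duration_ms": 4500},
]


def correlate(metrics, logs, spans):
    """Group all three signals under the request ID they share."""
    by_request = defaultdict(lambda: {"metrics": [], "logs": [], "spans": []})
    for record in metrics:
        by_request[record["request_id"]]["metrics"].append(record)
    for record in logs:
        by_request[record["request_id"]]["logs"].append(record)
    for record in spans:
        by_request[record["request_id"]]["spans"].append(record)
    return dict(by_request)


def slow_requests(correlated, threshold_ms):
    """Return the full context for requests whose latency exceeds the threshold."""
    return {
        request_id: context
        for request_id, context in correlated.items()
        if any(m["latency_ms"] > threshold_ms for m in context["metrics"])
    }


slow = slow_requests(correlate(metrics, logs, spans), threshold_ms=1000)
```

Here `slow` contains only `req-2`, together with its error log and its slow database span, so the symptom and its likely cause arrive in one result instead of three separate searches.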

OpenTelemetry as the unifying layer: In 2025, 79% of organizations either use or are evaluating OpenTelemetry, the open standard that’s become the backbone of modern telemetry collection. OpenTelemetry’s value proposition is simple but transformative: instrument once, export anywhere. Instead of vendor-specific agents fragmenting your data pipeline, OpenTelemetry provides a cloud-agnostic SDK for capturing metrics, traces, logs, and now profiling data through a single protocol (OTLP). This standardization breaks vendor lock-in while enabling true multi-cloud observability.

Context propagation across distributed boundaries: Modern applications don’t live in one place. A single user transaction might start with an AWS Lambda function, call APIs hosted on Azure, trigger Kafka streams, invoke a machine learning model on GCP, and write results to a data warehouse. End-to-end observability for modern data stacks means tracing that entire journey with complete fidelity, tracking not just service-to-service hops, but data lineage through ETL pipelines, model prediction latency in AI systems, and queue depths in event-driven architectures.
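One common way this journey is stitched together is the W3C Trace Context `traceparent` header, which OpenTelemetry propagates by default. The sketch below builds and forwards that header by hand using only the standard library, to show the mechanism; the function names are hypothetical, and in practice an OpenTelemetry SDK propagator would handle this for you. It also skips edge cases the specification covers, such as rejecting all-zero IDs.

```python
import re
import secrets

# traceparent layout: version "00", 32-hex trace ID, 16-hex parent span ID, 2-hex flags.
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent(sampled=True):
    """Start a new trace at the edge of the system."""
    trace_id = secrets.token_hex(16)  # 16 random bytes -> 32 hex characters
    span_id = secrets.token_hex(8)    # 8 random bytes -> 16 hex characters
    flags = "01" if sampled else "00"
    return f"00-{trace_id}-{span_id}-{flags}"


def child_traceparent(incoming):
    """Build the header a service sends downstream.

    The trace ID and flags are carried over unchanged; only the span ID is
    replaced, so every hop stays attached to the same trace.
    """
    match = TRACEPARENT_RE.match(incoming)
    if match is None:
        # Missing or malformed header: start a fresh trace rather than fail.
        return new_traceparent()
    trace_id, _parent_span_id, flags = match.groups()
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"


edge = new_traceparent()
downstream = child_traceparent(edge)
```

Because `edge` and `downstream` share the same trace ID, a backend can reassemble the Lambda call, the cross-cloud API request, and the queue consumer into a single trace even though each hop ran on a different platform.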

AI-driven intelligence, not just data collection: Collecting telemetry is table stakes. The breakthrough in end-to-end observability platforms is automated analysis: AI that learns normal system behavior, detects anomalies across all three signals simultaneously, and suggests root causes before engineers even look at a dashboard. When your Kubernetes cluster shows memory pressure while application error rates climb and traces reveal slow database connections, AI correlation engines connect those dots instantly, turning observability from descriptive to prescriptive.
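To make cross-signal detection concrete, here is a deliberately simple statistical stand-in: a z-score check that flags every signal deviating sharply from its recent baseline at the same moment. This is not how any specific vendor's AI engine works; the signal names, sample values, and threshold of 3.0 are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def z_score(history, current):
    """How many standard deviations the current value sits from its baseline."""
    sigma = stdev(history)
    if sigma == 0:
        return 0.0
    return (current - mean(history)) / sigma


def correlated_anomalies(baselines, current, threshold=3.0):
    """Return the names of all signals that are anomalous right now."""
    flagged = [
        name
        for name, history in baselines.items()
        if abs(z_score(history, current[name])) >= threshold
    ]
    return sorted(flagged)


# Hypothetical recent history for signals from three different sources.
baselines = {
    "memory_pct": [61, 63, 60, 62, 64],        # infrastructure metric
    "error_rate": [0.4, 0.5, 0.6, 0.5, 0.4],   # application metric
    "db_connect_ms": [12, 14, 13, 15, 12],     # derived from trace spans
    "cpu_pct": [40, 42, 41, 39, 43],           # infrastructure metric
}
current = {"memory_pct": 91, "error_rate": 4.2, "db_connect_ms": 180, "cpu_pct": 42}

flagged = correlated_anomalies(baselines, current)
```

With these sample values, memory, error rate, and database connect time are flagged together while CPU is not, which is the kind of co-occurrence a correlation engine would surface as a single incident rather than three unrelated alerts.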

The modern data stack demands modern observability: If your architecture includes Kafka, Airflow, dbt, Snowflake, or ML pipelines running PyTorch or TensorFlow, legacy monitoring can’t follow the data. End-to-end AI observability extends telemetry into training jobs, feature stores, model serving endpoints, and inference latency, giving data scientists and ML engineers the same operational visibility that SREs have long demanded for traditional applications.

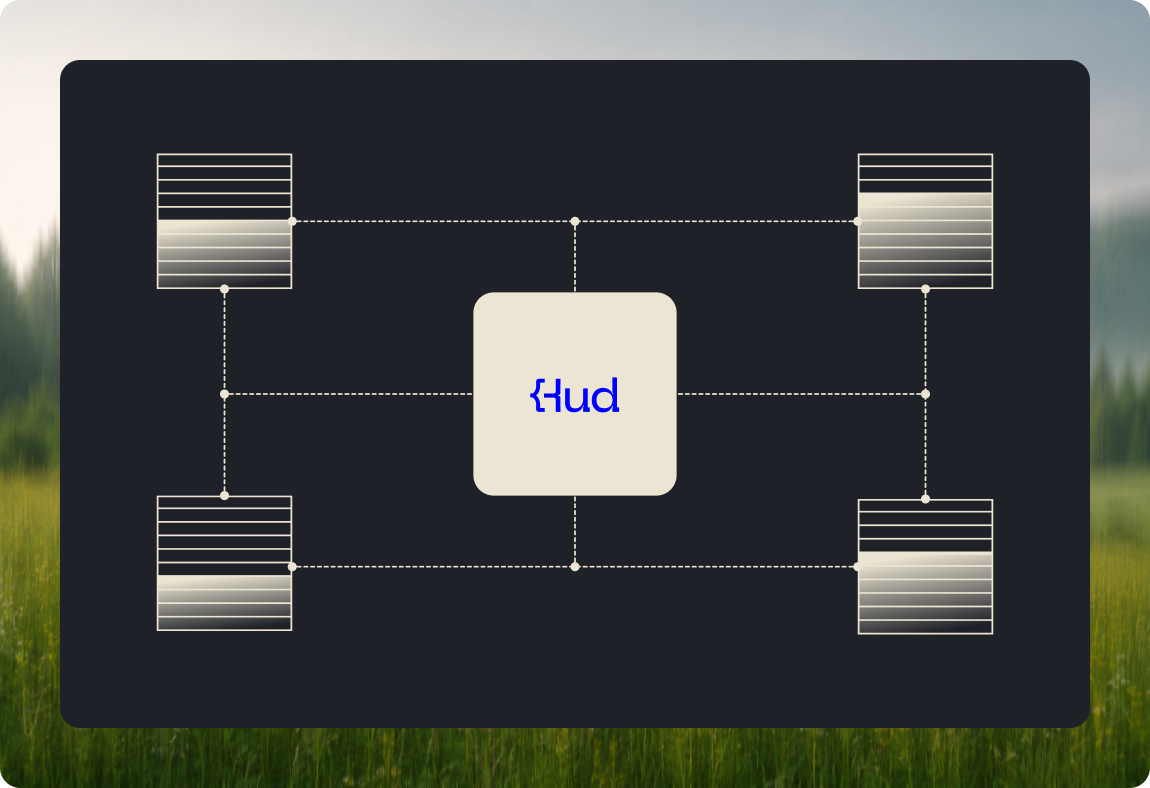

An end-to-end observability platform isn’t a single tool: It’s an architectural pattern built on unified telemetry collection, shared data models, cross-signal correlation, and a single pane of glass where every team sees the same truth. The goal isn’t more dashboards; it’s less time to insight.

Breaking Down Silos Between Dev, Ops, and Security Teams

The technical architecture isn’t the only thing fragmented in most organizations; the teams themselves are fragmented too. Siloed observability tools create siloed mental models, and siloed mental models turn every incident into an interdepartmental blame game.

Development lives in “works on my machine” isolation: Developers optimize code in local environments and staging clusters with limited production telemetry access. When issues surface post-deployment, they lack the performance baselines, real user traffic patterns, and infrastructure context needed for rapid diagnosis. The result: “Can’t reproduce locally” becomes the default response, while operations scrambles to gather stack traces and heap dumps that developers could have instrumented proactively.

Operations sees infrastructure without application context: SREs monitor CPU, memory, network throughput, and pod restarts. But when Kubernetes shows resource saturation, which application behavior triggered it? Was it a sudden traffic spike, a memory leak in new code, or a misconfigured autoscaler? Without correlated application traces and business metrics, operations can only describe symptoms, never root causes.

Security operates in a parallel universe: Security teams analyze threat patterns, suspicious authentication attempts, and anomalous API calls through dedicated SIEM platforms. Meanwhile, DevOps treats performance and security as separate concerns. The disconnect is dangerous: a latency spike might indicate a DDoS attack, but if security and performance data aren’t correlated, you’re fighting two separate fires instead of recognizing one coordinated threat.

End-to-end observability creates shared truth: When all teams query the same unified platform with the same correlated telemetry, the conversation shifts from “whose tool is right?” to “what’s actually happening?” A single trace now carries application metrics, infrastructure resource usage, and security context markers, visible to everyone. Developers see production behavior in the same interface where SREs monitor uptime and security analysts track authentication anomalies.

DevOps unification through shared visibility: The DevOps movement promised collaboration, but siloed tools sabotaged the vision. End-to-end observability delivers on that promise: developers get production-grade observability in staging environments using identical instrumentation, which means issues are caught before deployment instead of at 3 AM. SREs gain code-level visibility through distributed traces and profiling, empowering them to file actionable bug reports instead of vague “the app is slow” tickets.

DevSecOps: embedding security into the telemetry layer: In 2025, leading organizations don’t bolt security onto observability, they embed it from day one. When observability platforms ingest authentication logs, API access patterns, and dependency vulnerability scans alongside performance telemetry, security becomes proactive. Anomaly detection spots credential stuffing attacks because they correlate with latency spikes and failed login traces. Threat hunting happens in the same tool used for debugging, enabling security engineers to understand attack impact on application performance in real time.

From blame to ownership: the cultural shift: The real transformation isn’t technical, it’s organizational. When teams stop swivel-chairing between tools and start collaborating in shared dashboards, incident post-mortems change tone. Questions shift from “Who deployed the bad code?” to “What pattern did we miss, and how do we instrument better next time?” Shared observability creates shared accountability, reducing MTTR not just through better tools, but through better teamwork. End-to-end observability doesn’t just connect data, it connects people.

The Transformation Impact

Moving to end-to-end observability isn’t an incremental improvement, it’s a step-function change in how organizations operate.

Performance gains become measurable and repeatable: Organizations report 50-70% reductions in Mean Time to Resolution after adopting unified observability platforms. The math is simple: when engineers spend 10 minutes getting to root cause instead of 45 minutes correlating data across tools, incident response transforms from reactive firefighting to systematic problem-solving. AI-powered anomaly detection shifts the model further, catching issues before customers report them, sometimes before they even impact production.
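The relationship between the 45-to-10-minute example and the 50-70% range can be checked with a little arithmetic. The sketch below assumes MTTR is simply diagnosis time plus remediation time; the remediation figures are hypothetical, chosen only to show how the range arises.

```python
def mttr_reduction(diagnose_before, diagnose_after, fix_minutes):
    """Fractional MTTR reduction when only the diagnosis phase gets faster."""
    before = diagnose_before + fix_minutes
    after = diagnose_after + fix_minutes
    return (before - after) / before


# Diagnosis drops from 45 to 10 minutes; remediation time is an assumption.
for fix_minutes in (5, 15, 25):
    reduction = mttr_reduction(45, 10, fix_minutes)
    print(f"fix={fix_minutes} min -> MTTR reduction {reduction:.0%}")
```

Under these assumptions, a remediation phase of 5 to 25 minutes puts the overall reduction between 70% and 50%. The longer the fix itself takes, the smaller the share of MTTR that faster diagnosis can remove.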

Security moves from reactive to predictive: When security telemetry integrates with performance baselines, threat detection gets smarter. A spike in failed authentication attempts correlated with increased API latency and geographic anomalies in request traces isn’t just suspicious, it’s actionable intelligence. Security teams using unified observability platforms detect breaches 60% faster because they’re not reconstructing attack chains from fragmented logs. The entire attack surface becomes observable: from code dependencies with known CVEs to runtime behavior indicating zero-day exploitation attempts.

Cost optimization replaces cost explosion: Tool sprawl isn’t just an operational problem; it’s a budget killer. Multiple overlapping monitoring solutions mean multiple vendor contracts, multiple training programs, and multiple integration maintenance burdens. End-to-end observability consolidates that spend while delivering better outcomes. In addition, intelligent data management (sampling high-volume traces, compressing low-value logs, and retaining only anomalous metrics) cuts storage costs by 40-60% compared to “collect everything, ask questions later” approaches.
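Trace sampling is the easiest of these techniques to sketch. The example below keeps every trace that contains an error and a fixed fraction of the rest, using a hash of the trace ID so the decision is deterministic. It assumes the decision is made after the trace completes (tail-style), and the function name and 10% default rate are illustrative rather than taken from any product.

```python
import hashlib


def keep_trace(trace_id, has_error, sample_rate=0.1):
    """Decide whether a completed trace is worth storing.

    Error traces are always kept. Healthy traces are kept when the hash of
    their trace ID falls below the sample rate, so every component that sees
    the same trace ID reaches the same decision.
    """
    if has_error:
        return True
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the digest to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < sample_rate


# Roughly 10% of healthy traces survive; all error traces do.
kept = sum(keep_trace(f"trace-{i}", has_error=False) for i in range(10_000))
```

Hashing the trace ID rather than drawing a random number means a trace is either stored whole or dropped whole, which avoids partial traces that are expensive to keep and useless to debug.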

Operational efficiency compounds over time: The benefits aren’t one-time. Shared observability enables continuous improvement: developers instrument code better because they see production impact immediately. SREs build more accurate capacity models because they understand application behavior, not just infrastructure utilization. Security teams harden attack surfaces because they visualize exploitable paths through distributed traces. The feedback loop tightens, and system reliability keeps improving, not because of heroic effort, but because everyone has the data they need to make better decisions.

The transformation isn’t just technical. It’s strategic. Organizations that treat observability as end-to-end infrastructure, not as a collection of point solutions, ship faster, sleep better, and outmaneuver competitors still debugging in the dark.

The Path Forward

The question isn’t whether your organization needs end-to-end observability, it’s how long you can afford to operate without it.

As systems grow more distributed, architectures more complex, and customer expectations more demanding, fragmented visibility becomes a competitive liability. The organizations winning in 2025 and beyond aren’t the ones with the most monitoring tools, they’re the ones with unified telemetry architectures that turn data into decisions faster than their competitors can even diagnose problems.

Start by assessing your current observability maturity. How many tools do your teams use during incidents? How long does it take to correlate a metric spike with the code change that caused it? How often do dev, ops, and security teams work from conflicting data? The answers reveal your readiness gap.

The future of observability is already here: OpenTelemetry standardization, AI-driven root cause analysis, security-integrated platforms, and modern data stack visibility that extends from edge functions to machine learning pipelines. The only question is whether you’ll adopt these capabilities proactively, or reactively, after the next major outage forces your hand.

Modern systems demand modern observability. It’s time to break the silos.

Frequently Asked Questions

How is end-to-end observability different from standard observability tools?

Standard observability tools collect metrics, logs, or traces independently, requiring manual correlation across separate platforms. End-to-end observability unifies all three signals with shared context from the moment of collection, enabling you to query across metrics, logs, and traces simultaneously. Instead of jumping between Prometheus, Elasticsearch, and Jaeger, you see correlated telemetry in one interface, drastically reducing time to insight during incidents.

Does end-to-end observability require replacing existing monitoring systems?

Not necessarily. Modern end-to-end observability platforms integrate with existing tools through standards like OpenTelemetry, allowing gradual migration rather than rip-and-replace. You can start by instrumenting critical services with unified telemetry while keeping legacy systems operational. Over time, organizations typically consolidate tools to reduce operational overhead, but the transition can be phased based on team readiness and business priorities without disrupting current workflows.

How does observability support cloud-native security strategies?

Observability provides the visibility layer that modern security strategies demand. By correlating authentication logs, API access patterns, and distributed traces in real time, security teams detect threats faster and understand attack impact immediately. Cloud-native security requires understanding service-to-service communication, lateral movement patterns, and anomalous behavior across ephemeral infrastructure, all impossible without comprehensive, correlated telemetry that observability platforms deliver. DevSecOps becomes operational, not aspirational.

Can end-to-end observability reduce incident resolution time?

Absolutely. Organizations adopting unified observability platforms report 50-70% reductions in Mean Time to Resolution (MTTR). The improvement comes from eliminating context-gathering overhead: engineers no longer waste time manually correlating data across fragmented tools. AI-driven root cause analysis accelerates diagnosis further by automatically connecting anomalies across metrics, logs, and traces. Faster incident response means less downtime, lower revenue impact, and fewer middle-of-the-night emergency pages.

Is end-to-end observability more relevant for large or distributed teams?

While large distributed teams benefit significantly from breaking down silos between dev, ops, and security, end-to-end observability delivers value at any scale. Small teams often lack the resources to maintain multiple monitoring tools, so unified platforms reduce their operational burden dramatically. Distributed teams across time zones gain async collaboration through shared dashboards showing consistent truth. Organizations running microservices, multi-cloud infrastructure, or complex data pipelines see outsized returns regardless of team size.